In this post I talk about a few papers about non-standard robot embodiments I found a delight to learn about. If you’re a little fatigued by the current humanoid hype cycle like me and want a brief distraction then this is a read for you.

Why the fatigue?

There is a LOT of hype. So many startups that you can’t even comfortably fit all the companies on a map for China. Bank of America forecasts 3 billion clankers by 2060. We’re talking predictions of smartphone level of adoption.

Now don’t get me wrong I’m not against humanoids. I get it - the world is built for the human form factor, etc. Once they cross a cost/intelligence threshold I’m definitely getting one to abuse.

But I’m a bit exhausted from all the demos of these constipated stoners “cleaning” set piece rooms that are already in a better state than my living room has ever been in. I needed a break. And then I came across this post about RoboPanoptes “the all seeing robot”. It was so refreshing to see someone just go “why not?”.

I started looking around for other unconventional embodiments. And you know what? There’s a lot more to robotics than replicating feeble human-like bodies.

Robots that have jobs - quadrupeds

The closest thing to humanoids in adoption is man’s best friend - the quadruped. And robot dogs are not speculative; they already have jobs and not just demos in mockup homes. Boston Dynamics has been selling its Spot model for years now and it’s been successfully deployed for research, remote inspection and hazardous surveillance type work (tunnels, mines).

The Spot robot exploring Kidd Creek Mine, Ontario.

If you ever had to get up early after a late night you know that rolling comes easier than attempting to walk. Well that’s generally true in locomotion - wheels are much more energy efficient than using motors to lift individual limbs. But legged locomotion makes traversing rough, rocky terrain and stairs possible. So why not just attach wheels to the dog’s legs?

The first time one of these got an actual “wow” out of me was when Unitree released their B2-W dog promo video.

Unitree B2-W dog wheeling it down in the water at high velocity. I come back to this video every once in a while to admire how far ahead China is.

Wheeled quadrupeds as a thing aren’t exactly new - ETH Zurich showed this with ANYmal back in 2017. But these wonderful affronts to evolution only became commercially viable recently because we’ve hit a couple of inflection points: hardware got cheaper and more capable and RL training became tractable, among others.

Quadrupeds on wheels are becoming a much more common sight. There are companies like Pudu Robotics and RIVR (Swiss successor to ANYmal) that use them for deliveries.

A RIVR delivery dog climbing stairs with cargo strapped on its back.

This form factor actually makes a lot of sense for delivery - on flat surfaces they’re really efficient because it’s wheeled locomotion. But they can also conquer something untenable to mobile platforms of the past - stairs.

Eldritch horrors in the lab

OK attaching wheels to legs makes perfect sense and is an ingenious solution to the logistics problem. But can we stretch our imagination further? Take more inspiration from nature and bend the rules some more to solve other problems? Let’s take a look at some recent works that challenge the status quo.

Many eyes

I started rethinking robo embodiments when this paper hit my feed. This awakened a child-like wonder I had lost. Why not put a bunch of eyeballs on an arm? Why limit ourselves? You basically just append more numbers to the feature vector. I do believe this type of thinking is increasingly necessary in the coming years with the availability of highly capable LLM-driven agents. Once the technical details become less important the limiting factor is us - the human in the loop - and our imagination, or lack thereof.

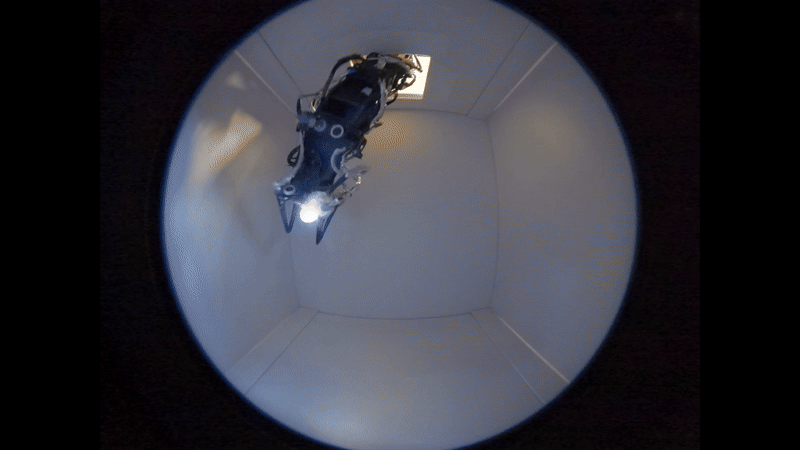

Screengrab from Xiaomeng Xu's blog post introducing this menace. Watch it creep into an enclosed box through a small opening, exploring with its many eyes.

If you’re into Greek mythology Panoptes might sound familiar - the name is inspired by Argos Panoptes, the all-seeing giant, usually depicted as a being with many eyes on his head and/or body. Likewise RoboPanoptes is the all-seeing robot with, you guessed it, many cameras on its body.

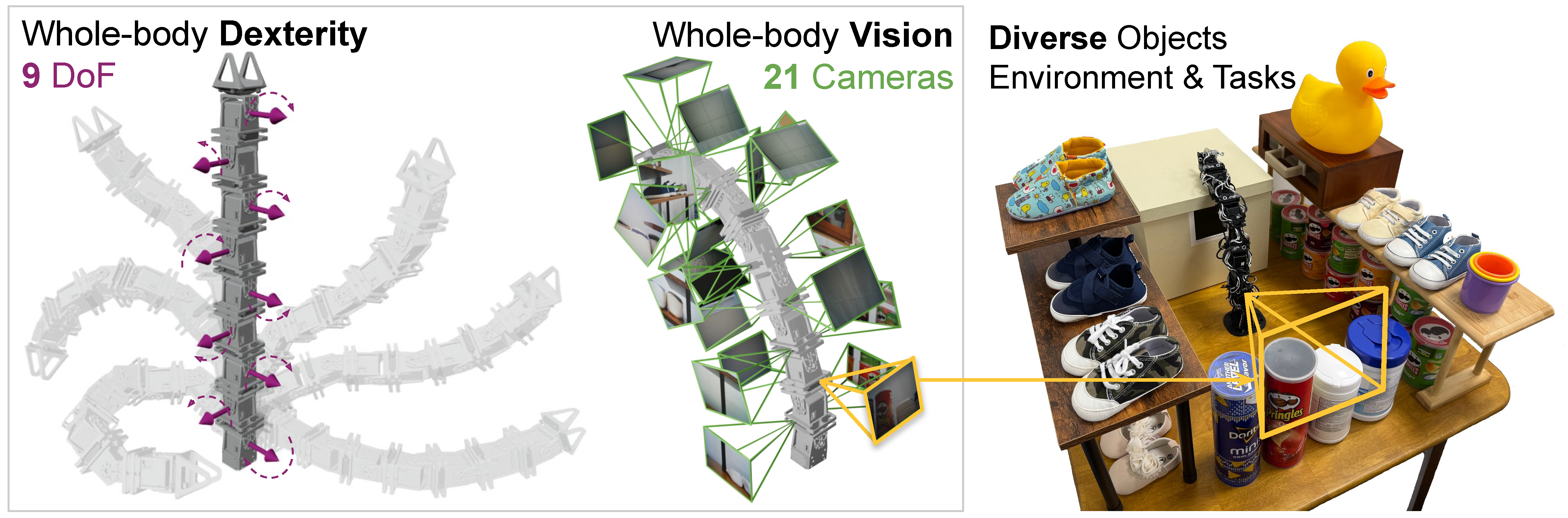

RoboPanoptes overview: 9 DoF body and 21 cameras.

The Panoptes body is built out of modular sections. Each segment is 1 servo + 2 cameras, except the head module with 5 cameras (4 of which are pointing backwards to compensate for blindspots). You can see in the screengrab from the paper the “head” camera is looking forward.

Full-body dexterity

Most industrial arms today are end-effector controlled. We only care where the hand/gripper is in space. Our goal is to displace, or manipulate an object somehow using the end-effector. But in real life we will often use our whole body - while holding your car keys in one hand, and a snack in another, you can still use your forearm to sweep incriminating documents into a paper shredder and switch it on with your foot because you’re in a rush.

Example of whole-body dexterity: autonomously clearing 10 cubes in one sweep.

In this work they demonstrate RoboPanoptes doing various whole-body tasks like tidying up a desk by using its entire arm to sweep all the little blocks in one motion. If this were traditional end-effector control the policy would default to pick-and-place of each individual item, which would be significantly less efficient.

Blink training

I really liked reading about the engineering challenges they faced. Because they were using 21 tiny Adafruit USB cameras they constantly ran into bandwidth limitations and synchronization issues. Remember, this is a 3D printed arm with inexpensive camera modules that need to fit into a small form factor. They reported a frame drop rate of 4.4%! This means each time step has a ~61% chance of missing at least one camera frame.
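The ~61% figure is easy to sanity-check. Here is a quick sketch of the arithmetic, assuming the 4.4% is a per-camera drop rate and that cameras drop frames independently of each other (both are my assumptions, not something I verified against the paper):

```python
# Chance that at least one of N cameras drops its frame in a single time step.
# Assumption: each camera drops independently at the same per-camera rate.
NUM_CAMERAS = 21
DROP_RATE = 0.044


def p_any_drop(num_cameras: int, drop_rate: float) -> float:
    # Probability that every camera delivers its frame.
    p_all_arrive = (1.0 - drop_rate) ** num_cameras
    # The complement is "at least one frame is missing".
    return 1.0 - p_all_arrive


p = p_any_drop(NUM_CAMERAS, DROP_RATE)
print(f"{p:.1%}")
```

A small per-camera rate compounds quickly once you have 21 of them, which is why a complete set of frames is the exception rather than the rule.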

To overcome this and add robustness to the robot policy they introduced a new training strategy they coined “blink training”. During training this works by picking an input camera frame at random and blanking it out. Kinda like how we apply dropout for regularization, but instead of turning off neurons it turns off camera inputs. A creative and effective way to overcome hardware limitations with software innovation.
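To make the idea concrete, here is a minimal sketch of what blanking a random camera frame could look like as a data augmentation step. This is my own illustration, not the authors’ code: the `blink` function, the `blink_prob` parameter and the flat-list frame format are all hypothetical.

```python
import random


def blink(frames, blink_prob=0.5, rng=random):
    """Return a copy of `frames` with at most one camera frame zeroed out.

    frames: list of per-camera frames, each a flat list of pixel values.
    blink_prob: hypothetical chance that a frame gets blanked for this sample.
    """
    out = [list(f) for f in frames]  # copy so the original sample is untouched
    if out and rng.random() < blink_prob:
        idx = rng.randrange(len(out))     # pick one camera at random
        out[idx] = [0] * len(out[idx])    # replace its frame with zeros
    return out


rng = random.Random(0)
frames = [[1, 2, 3, 4] for _ in range(21)]  # 21 tiny dummy "images"
augmented = blink(frames, blink_prob=1.0, rng=rng)
blanked = [i for i, f in enumerate(augmented) if not any(f)]
```

The policy sees these partially blind samples during training, so a dropped frame at deployment time looks like something it has already learned to cope with.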

Imitation learning

You can’t use standard end-effector control to teleoperate a robot arm meant for full-body dexterity. You need the ability to treat the whole body as an end-effector - so they made a replica leader robot.

Teleoperation of the robot is done by manually manipulating a leader arm.

Now this approach is a little suboptimal because obviously a human operator manipulating a 9-DoF snake in real-time won’t produce an optimal policy for this embodiment. This is a shortcoming of imitation learning - it mimics what the human does based on what the human sees, it doesn’t optimize for what the robot is capable of.

I really hope in the future to see some follow up work that would incorporate reinforcement learning. What kind of emergent weird behaviors would we see from an arm covered in eyes?

Tentacle arms

Soft robotics is a whole area of research where we can find robots inspired by octopuses, snakes, elephant trunks, etc. SpiRobs is an example of a tentacle-like robot arm based on a logarithmic spiral design. They used the same principle to print a miniature arm (tens of centimeters), a 1 meter long tentacle and a cluster of tentacles to use as a gripper. The miniature arm is so precise it can grab an ant without hurting it. I recommend watching their YouTube video. They have some really cool demos, even attaching the arm to a drone.

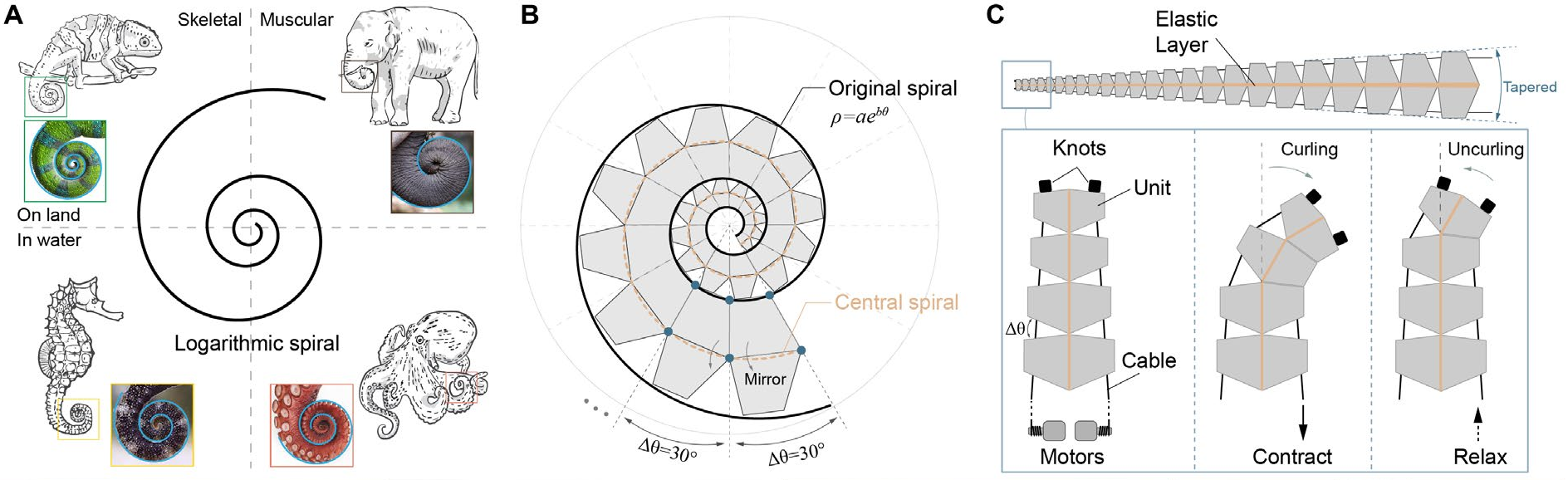

Overview snapshot of multiple SpiRobs arms. The tiniest one can grab an ant, while the larger ones can pick up stones and whole potted plants.

Spiral design

The spiral design draws inspiration from nature - many lifeforms, regardless of scale, share a similar spiral shape in their appendages (like the tail of a chameleon). It’s a design pattern that keeps coming up in nature, like hexagons that are bestagons or the golden ratio.

Screengrab from the SpiRobs paper showing the animals it was inspired from and a more detailed view of the design.

Each arm starts as a spiral with mirrored sections and spacing between each unit to allow unencumbered curling and uncurling. All parts were 3D printed and connected with an elastic band and a couple of cables connected to a pair of motors. The motors apply antagonistic force and drive the movement of the arm.
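For anyone who hasn’t met the logarithmic spiral before, it’s the curve r = a * exp(b * theta). Below is a small sketch that samples points on one. The values of `a` and `b` are made up for illustration and are not taken from the paper.

```python
import math


def log_spiral_points(a, b, theta_max, n):
    """Sample n points on r = a * exp(b * theta) for theta in [0, theta_max]."""
    pts = []
    for i in range(n):
        theta = theta_max * i / (n - 1)
        r = a * math.exp(b * theta)
        pts.append((r * math.cos(theta), r * math.sin(theta)))
    return pts


# Illustrative parameters only: two full turns, sampled every quarter turn.
points = log_spiral_points(a=1.0, b=0.2, theta_max=4 * math.pi, n=9)
radii = [math.hypot(x, y) for x, y in points]
# Ratio between consecutive radii at equal angular steps.
ratios = [radii[i + 1] / radii[i] for i in range(len(radii) - 1)]
```

Every entry in `ratios` comes out the same, because the radius grows by a constant factor per equal step in angle. Changing `a` only rescales the whole curve, which fits nicely with the same design working at ant scale and at 1 meter.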

Grasping strategy

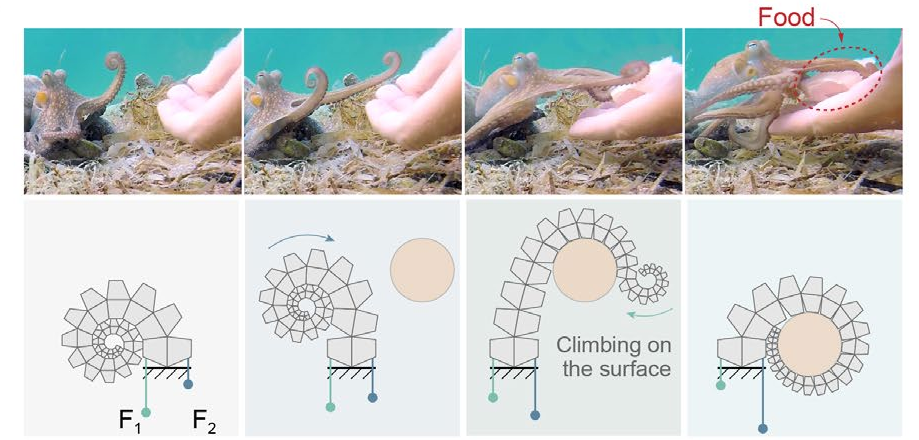

Surprisingly, there’s no ML, no fancy policies for controlling this thing. They engineered a grasping solution that mimics the octopus. By applying and reducing force on the two cables in stages (packing, reaching, wrapping and grasping) the arm can effectively entangle any object within its range.

The upper figure shows how an octopus reaches for its food by curling and uncurling its arm. The SpiRobs arm mimics this by using 2 motors and applying force on each cable in a specific sequence.

They can steer the direction of the tentacle by calculating how much force to apply in the initial packing phase when the arm is fully curled. The neat part about this arm is that you don’t need to be precise - just the general direction is enough to wrap most objects. This can be useful for objects that move, for example, as long as the tentacle makes contact and has enough length left to wrap around.
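Since it’s just a fixed sequence of cable forces, the whole thing can be pictured as a tiny state machine. The sketch below is purely illustrative: the tension numbers, the `steer` parameter and the 0.2 scaling are placeholders I made up, and the paper’s actual force profiles will differ.

```python
from enum import Enum


class Stage(Enum):
    PACKING = "packing"
    REACHING = "reaching"
    WRAPPING = "wrapping"
    GRASPING = "grasping"


# Hypothetical normalized tensions (cable_a, cable_b); 0 = slack, 1 = max pull.
ILLUSTRATIVE_TENSIONS = {
    Stage.PACKING: (0.8, 0.2),
    Stage.REACHING: (0.2, 0.6),
    Stage.WRAPPING: (0.7, 0.1),
    Stage.GRASPING: (1.0, 0.1),
}


def clamp(x):
    return max(0.0, min(1.0, x))


def grasp_sequence(steer=0.0):
    """Yield (stage, cable_a, cable_b) in the fixed stage order.

    steer: hypothetical bias in [-1, 1], applied only during packing.
    """
    for stage in Stage:  # Enum members iterate in definition order
        a, b = ILLUSTRATIVE_TENSIONS[stage]
        if stage is Stage.PACKING:
            a, b = clamp(a + 0.2 * steer), clamp(b - 0.2 * steer)
        yield stage, a, b


default_plan = list(grasp_sequence())
steered_plan = list(grasp_sequence(steer=0.5))
```

Only the packing entry differs between `default_plan` and `steered_plan`, which mirrors the idea that steering is decided up front while the arm is still fully curled.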

Soft grippers in general are especially useful in manufacturing or warehouse environments where the robots need strong yet gentle grip to carry around intricate and fragile items. I’d like to imagine future warehouses will have a lot of tentacle-handed robots running around.

Bio-hybrids

Some researchers are trying to incorporate biological parts into the robot. Why? One of the motivations is that fleshy bits are less prone to breakage because they can self-heal. They’re also somewhat more energy efficient.

Fungal mycelia

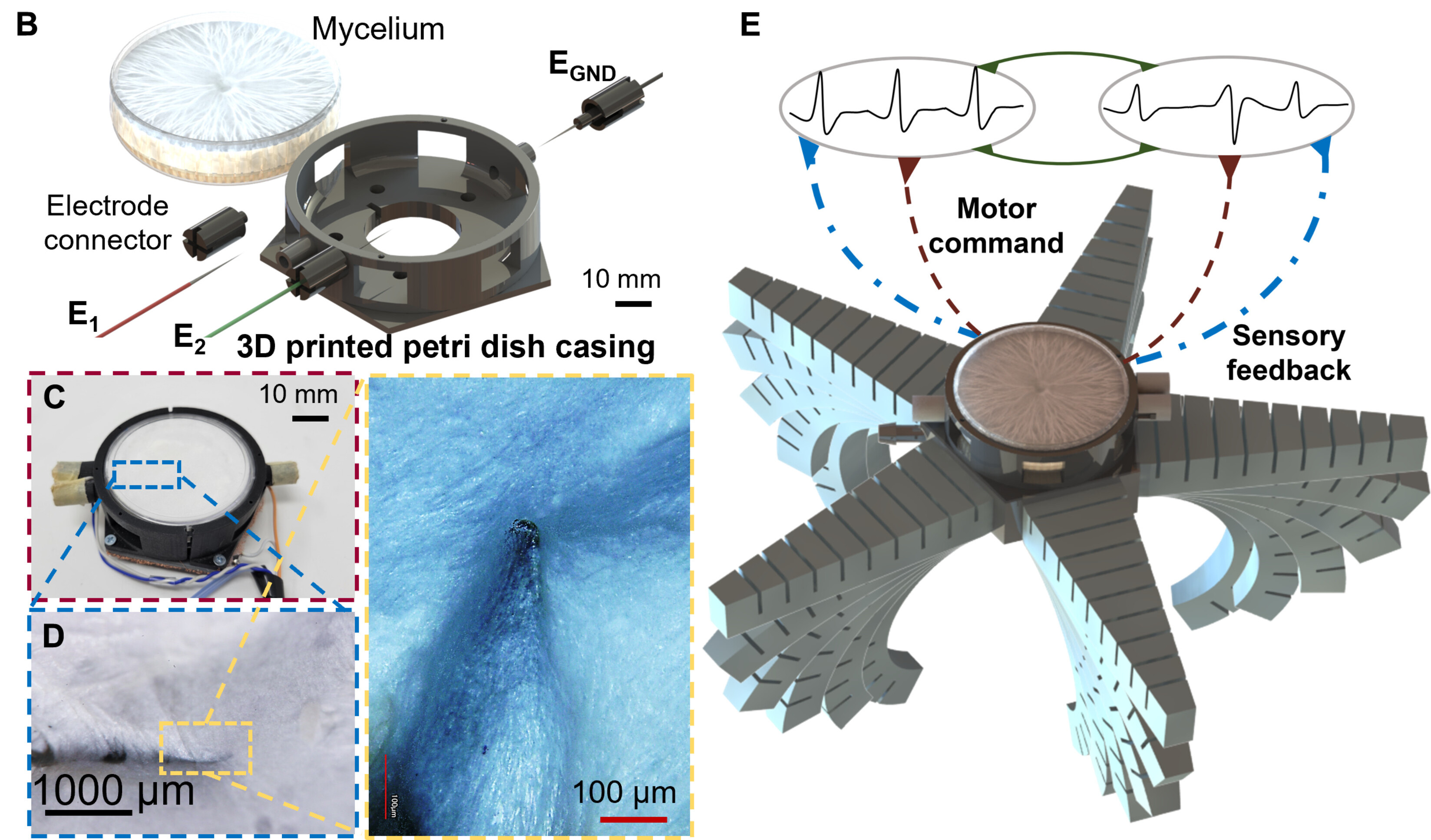

There was a 2024 paper from Cornell University where they grew fungal mycelia straight into some electrodes and used its measured electrical activity to drive a small 3D printed starfish-like robot. Mycelium is basically the underground root network of the mushroom, responsible for sensing, communicating and transporting nutrients/water. They chose fungi because it’s much easier to culture than animal cells in large quantities.

Figure from the paper showing the mycelium along its petri dish/electrodes and the 3D printed soft robot body.

How fungus controls the robot

They kept the mycelium in a Faraday cage for 30 days measuring ambient voltage values. Without stimulation the mycelium produced spikes of electrical signals averaging roughly 126μV (tiny!) with intervals of around 0.6 seconds. Once they could detect those, with the addition of some signal processing they got it to drive a soft robot.

The robot control pipeline. Electrical signals recorded from the mycelium are cleaned up and converted into PWM signals that switch a solenoid valve, modulating air pressure in the robot’s pneumatic soft actuators.
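Here is roughly how I picture that pipeline in code. It’s a toy sketch under my own assumptions: threshold-based spike detection, a linear mapping from spike rate to PWM duty cycle, and made-up values for the threshold, sample rate and maximum rate. The paper’s actual signal processing is more involved.

```python
def detect_spikes(samples_uv, threshold_uv):
    """Return indices where the trace crosses the threshold going upward."""
    return [
        i
        for i in range(1, len(samples_uv))
        if samples_uv[i - 1] < threshold_uv <= samples_uv[i]
    ]


def spike_rate_hz(spike_indices, sample_rate_hz, num_samples):
    """Average number of spikes per second over the recording."""
    return len(spike_indices) * sample_rate_hz / num_samples


def duty_cycle(rate_hz, max_rate_hz):
    """Map spike rate linearly to a PWM duty cycle, clamped to [0, 1]."""
    return max(0.0, min(1.0, rate_hz / max_rate_hz))


# Toy 2-second trace sampled at 10 Hz (values in microvolts).
SAMPLE_RATE_HZ = 10  # assumed, for illustration
trace = [0, 0, 130, 0, 0, 0, 0, 0, 125, 0, 0, 0, 0, 0, 128, 0, 0, 0, 0, 0]

spikes = detect_spikes(trace, threshold_uv=100)  # threshold is an assumption
rate = spike_rate_hz(spikes, SAMPLE_RATE_HZ, len(trace))
duty = duty_cycle(rate, max_rate_hz=3.0)  # max rate is an assumption
```

The duty cycle would then set how long the solenoid valve stays open per PWM period, so more spiking means more air into the soft actuators.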

A cool concept I learned from this paper is the central pattern generator (CPG). Animals have these as neural circuits in the brainstem and spinal cord to produce rhythmic motor patterns for things like walking, breathing, etc. The mycelium acts like a CPG when exposed to UV light.

The mycelia can sense things other than just light and the researchers will be trying to leverage that in areas like agricultural monitoring. As unsettling as bio-hybrids are, the self-healing aspect is quite compelling and they could be quite beneficial as long-lasting autonomous monitoring systems living in harmony with the plants and crops.

Specialized bots at work

There’s a long-standing specialist vs generalist debate when it comes to robots. Surely generalists will come but many prominent figures like Ken Goldberg predict that it won’t happen in the next few years. I have a feeling that there will always be a need for specialists for a variety of tasks and environments the human form factor is simply unsuitable for.

Quadrupeds probably could’ve gone here as well but to me they feel more like a weird mix between generalist and specialist. So for this section I’ll just rattle off a couple examples of robots that specialize in very specific niches that I found interesting.

The arachnitects

Recently a startup called Crest Robotics in Sydney (of course it had to be Australia) created a giant spider named Charlotte for the purposes of 3D printing houses on the moon. Charlotte is said to work at the speed of 100 bricklayers, which if they deliver is pretty impressive.

Render of Charlotte secreting a house much like a spider would a web.

It makes sense to use one of these on the moon - many legs can handle various terrains, it can also dynamically adapt its building height and doesn’t need any scaffolding because it’s a spider.

The gentle invaders

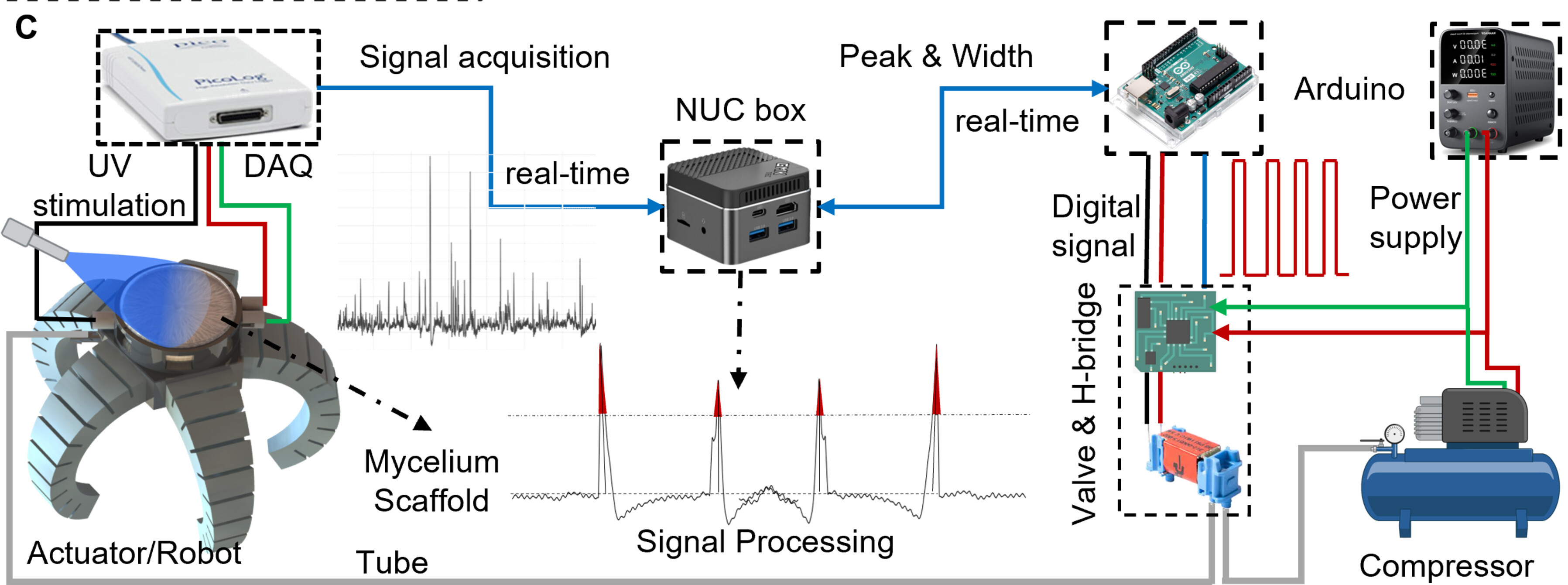

A Brooklyn-based startup, Pliant Energy Systems, created a beautiful amphibious robot that propels itself using only a pair of fins. It’s capable of both crawling on the floor and swimming underwater. The fins give it really good maneuverability in all directions and let it change direction almost instantly.

C-Ray swimming in water and crawling like a millipede on land.

A unique thing about this embodiment is how well it cohabits with nature. Because it moves using manta ray type wings it’s less likely to damage plants. And no sharp propellers means little fishies don’t turn into paste for fried fish balls. This is exactly what you want from a non-invasive (or minimally invasive) exploration robot.

Hull-huggers for maintenance

One place where non-generalists are dominating, and likely to keep dominating, is autonomous inspection of structures where it would be too dangerous or impractical to send humans. Lukas Ziegler had a nice thread summarizing how big of a deal inspection & maintenance bots will be.

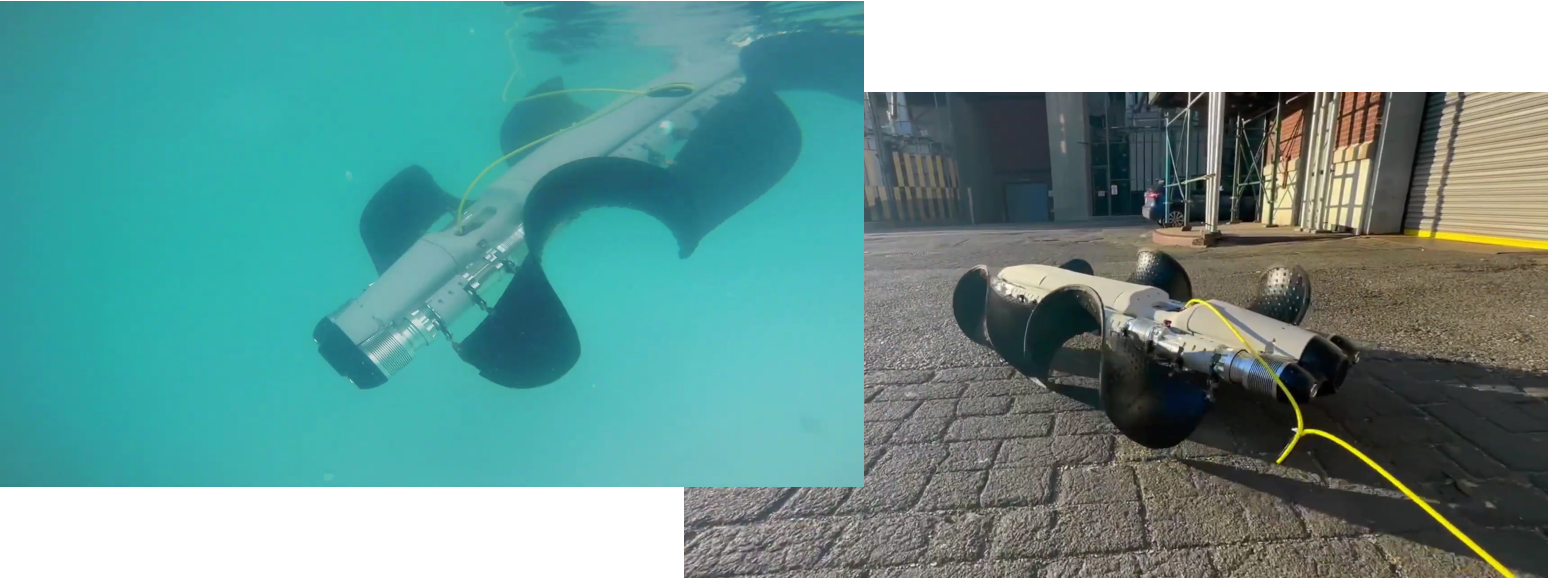

Gecko Robotics built a magnetic wall-climbing robot that crawls over steel and scans it with ultrasound. This is useful for crawling around pipelines and ships to pre-emptively flag maintenance needs. In fact they recently got a $71 million deal with the US Navy to deploy their crawlers for ship maintenance.

Gecko Robotics Cantilever crawler robot clinging to a ship's hull.

Going into pipes and various underground infrastructure is another area we want tiny specialists for. Though if they came up with 15cm tall humanoids I’d be down for teams of tiny guys going on pipe inspection missions. More practically, we’ll see more crawlers like the one from Tiny Inuktun.

Tiny Inuktun crawler robot launching deep into the pipes.

Technically we could strap some climbing shoes or swimming fins that divers use to a humanoid robot. But at least in the near term while they’re still trying to figure out pick & place, it makes a lot of sense to have tiny specialists.

Closing

Generalists will be a huge unlock, but we’ll always need specialists, “freaks” of all shapes and sizes for tasks perhaps we haven’t imagined yet.

As AI tools evolve, robot embodiments are likely to become a design space, not a constraint. Personally, I’m looking forward to when we’ve conquered the digital and can start “vibe coding” physical solutions too.

TTFN